A first look at Safecont, a content quality analysis SEO tool

The most vigorous software for quality content and SEO

Safecont was an online SEO tool from Data elasticity S.L. in Spain that analysed your website’s content and highlighted where you should consider improving it.

Google is updating its core algorithms on what appears to be a near-daily basis. The old tricks of several years ago no longer work, and any new exploits found are unlikely to last long enough to be an ongoing strategy. So Google is finally delivering what it has been talking about for many years: quality results for the questions that users ask it. Since 2014, Google’s Search Quality Evaluator Guidelines have told us that E-A-T (Expertise, Authoritativeness, Trustworthiness) is important, but we did not really see this in action until 2018. Everyone talks about great content, and that is where E-A-T comes into play. But how do we measure and audit that content?

Enter Safecont

Safecont summarises the results of its mathematical calculations to make reviewing content easier, while adapting to the new era of artificial intelligence in SEO. Safecont markets itself as “The most vigorous software for quality content and SEO”. That is a big claim, so let’s see!

Content Audits

Technical audits can unearth issues in the code that can be fixed and fine-tuned, but that has not been so easy for the content itself: few tools do content audits. One of the biggest problems with websites is the quality of their content, as it varies so much from site to site, and language is a bit more complicated than code! We know from Google’s own Search Quality Evaluator Guidelines that Expertise, Authoritativeness and Trustworthiness (E-A-T) are important, and page quality ratings depend on the intent and purpose of the content. Safecont analyses the quality of all of the site’s content and lets you focus on the issues it finds. This is great for organising improvements to that content, especially your evergreen items.

PandaRisk

The analysis includes an overall PandaRisk assessment for the site (at page level this is called PageRisk), which is a content quality check. Thin content is a particular problem on e-commerce sites, especially where third-party products are sold. If the products are your own, you have every reason to write great content about them, but if you buy in products and resell them, you might be tempted to copy content (including images) from the manufacturer’s website or brochure, or to write very little if you have a lot of products on sale. Either way, there will be competitors willing to spend more time writing good content, and they are likely to get the higher SERP positions, not you.

Three elements make up PandaRisk:

- Similarity
- Thin content
- External duplication

The higher your score, the higher your Panda risk. Add to this the Page Strength, which is based on the relevance of the content within the structure of the site, and you get four scores for each page on your site:

- Page Risk (the Panda risk for each page)
- Page Strength
- Similarity
- External Duplication

These allow you to focus in on where to prioritise rewriting tasks.

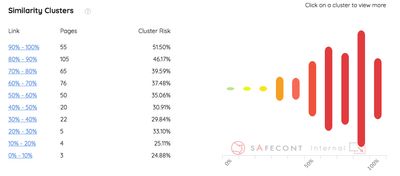

Clusters

The Clusters report shows the risk for groups of content that are similar in their composition, including external content. External Duplicate is an interesting one: the site I tested had none. That was to be expected, as the owners had gone to a lot of effort to create unique content for the site even though they resell other manufacturers’ products alongside their own, but it means I cannot report on how effective this is.

Similarity

The Similarity report initially looks exactly the same as the Clusters report, but it covers internal content. A lot of sites have duplicated elements such as menus and footers, and the thinner the main content on a page, the bigger a factor that common content becomes: another reason not to have thin pages! I imagine that sites with content duplicated by their URL structure will suffer heavily here. The site I have been auditing has URLs both with and without a trailing slash, which creates duplicates, hence the number of pages flagged in this report.
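Trailing-slash duplicates are easy to find yourself before running a content audit. The sketch below is my own illustration, not how Safecont works: it normalises each URL (lower-cases the scheme and host, drops the fragment, and strips a trailing slash from any path other than the root) and groups the URLs that collapse to the same key. The helper names `normalise` and `find_slash_duplicates` are hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlsplit, urlunsplit


def normalise(url: str) -> str:
    """Illustrative only: lower-case scheme/host, drop fragment, strip trailing slash from non-root paths."""
    parts = urlsplit(url)
    path = parts.path
    if len(path) > 1 and path.endswith("/"):
        path = path.rstrip("/")
    if not path:
        path = "/"  # treat "https://example.com" and "https://example.com/" as the same page
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, parts.query, ""))


def find_slash_duplicates(urls):
    """Return {normalised URL: [original URLs]} for every key reached by more than one URL."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalise(url)].append(url)
    return {key: members for key, members in groups.items() if len(members) > 1}


crawl = [
    "https://example.com/shop/",
    "https://example.com/shop",
    "https://example.com/about",
]
print(find_slash_duplicates(crawl))
# {'https://example.com/shop': ['https://example.com/shop/', 'https://example.com/shop']}
```

Whichever form you keep, the usual fix is to 301-redirect the other form and point a canonical tag at the surviving URL.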

Pages listed as similar dilute each other’s potential to rank well. You can use this report to prune your site or to rewrite the pages that are cannibalising each other, though with a lot of similar products I imagine this will be a challenge!
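Safecont does not publish how it scores similarity. A common, simple way to compare two pages is the Jaccard similarity of their word shingles (overlapping runs of k words): shared shingles divided by all distinct shingles. The sketch below is an assumption, not Safecont’s method, and the page texts and k = 3 are made up; it shows why a shared menu and footer make thin pages look far more alike than pages with substantial unique content.

```python
import re


def shingles(text: str, k: int = 3) -> set:
    """Return the set of k-word shingles in a lower-cased text."""
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: str, b: str, k: int = 3) -> float:
    """Jaccard similarity |A & B| / |A | B| of two pages' shingle sets."""
    sa, sb = shingles(a, k), shingles(b, k)
    if not sa and not sb:
        return 1.0
    return len(sa & sb) / len(sa | sb)


# Hypothetical shared template text (menu plus footer) that appears on every page.
BOILERPLATE = "home shop about us contact delivery returns privacy policy terms"

thin_a = BOILERPLATE + " red widget ten pounds"
thin_b = BOILERPLATE + " blue widget twelve pounds"
rich_a = thin_a + " hand made in oak with a brass hinge and a lifetime guarantee"
rich_b = thin_b + " cast in aluminium and powder coated for outdoor use all year round"

print(f"thin pages: {jaccard(thin_a, thin_b):.2f}")  # thin pages: 0.50
print(f"rich pages: {jaccard(rich_a, rich_b):.2f}")  # rich pages: 0.20
```

The boilerplate contributes the same eight shingles to both pairs; adding unique copy only grows the union, so the similarity score falls.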

External Duplicate

I’m not sure where Safecont gets its external data from for this, but it could simply be testing the top-ranking sites for the terms yours is competing on. Either way, this is an impressively useful indicator of how unique the content on your site is.
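Whatever data Safecont uses, once you have the text of a manufacturer’s or competitor’s page, one way to express “how much of my page appears elsewhere” is containment: the share of your page’s shingles that also occur in the external text. Unlike Jaccard similarity, it is not diluted when the external page is much longer than yours. This is a sketch under that assumption; the product texts are invented and `containment` is a hypothetical helper.

```python
import re


def shingles(text: str, k: int = 3) -> set:
    """Return the set of k-word shingles in a lower-cased text."""
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def containment(page: str, external: str, k: int = 3) -> float:
    """Fraction of the page's k-word shingles that also appear in the external text."""
    own = shingles(page, k)
    if not own:
        return 0.0
    return len(own & shingles(external, k)) / len(own)


manufacturer = (
    "The Model X kettle boils a full litre in under three minutes "
    "and switches off automatically when dry."
)
copied = "The Model X kettle boils a full litre in under three minutes."
rewritten = "We timed it: our kettle reached a rolling boil in just under three minutes."

print(f"copied:    {containment(copied, manufacturer):.2f}")  # copied:    1.00
print(f"rewritten: {containment(rewritten, manufacturer):.2f}")  # rewritten: 0.08
```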

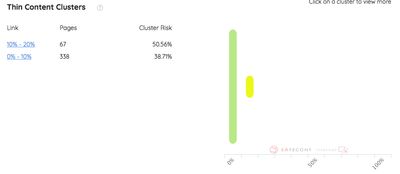

Thin Content

The Thin Content report highlights pages that do not have enough content on them to be effective. The e-commerce site I tested has added a lot of good content to its product pages and ranks well, but I expect this report to be one of the most useful for most sites.
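Safecont does not say how it decides that a page is thin. A crude first pass you can run yourself is to ignore the shared template and count the words in each page’s main content. The sketch below assumes your templates wrap the main content in a `<main>` element, and the 250-word threshold is an arbitrary assumption for illustration, not a Google or Safecont figure; `flag_thin_pages` is a hypothetical helper.

```python
import re
from html.parser import HTMLParser

THIN_WORD_THRESHOLD = 250  # assumption for illustration; tune it for your own site


class MainTextExtractor(HTMLParser):
    """Collect text inside <main>, ignoring menus and footers outside it.

    This simple version would also count any script/style text placed inside <main>.
    """

    def __init__(self):
        super().__init__()
        self.depth = 0  # how many <main> elements we are currently inside
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag == "main":
            self.depth += 1

    def handle_endtag(self, tag):
        if tag == "main" and self.depth:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth:
            self.chunks.append(data)


def main_word_count(html: str) -> int:
    parser = MainTextExtractor()
    parser.feed(html)
    parser.close()
    return len(re.findall(r"\w+", " ".join(parser.chunks)))


def flag_thin_pages(pages: dict, threshold: int = THIN_WORD_THRESHOLD) -> list:
    """Return (url, word_count) for pages below the threshold, thinnest first."""
    counts = [(url, main_word_count(html)) for url, html in pages.items()]
    return sorted((c for c in counts if c[1] < threshold), key=lambda c: c[1])


nav = "<nav>Home Shop About Contact</nav>"
pages = {
    "/widget-red": f"<html><body>{nav}<main><h1>Red widget</h1><p>Ten pounds.</p></main></body></html>",
    "/widget-guide": f"<html><body>{nav}<main><p>{'A detailed paragraph about widgets. ' * 60}</p></main></body></html>",
}
print(flag_thin_pages(pages))  # [('/widget-red', 4)]
```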

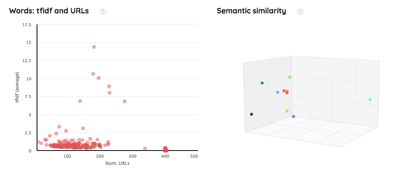

Semantic

The Semantic report has an interesting graph showing the spread of TF-IDF (term frequency-inverse document frequency) scores against the number of URLs using each word. TF-IDF is apparently a hot topic in Europe but underused in North America. The graph is a great way to see where the outliers are among your topic keywords. The better balanced your site’s topics are, the stronger it should be in the SERPs, which should mean more traffic! The report also includes a Clustering section, an automated way of grouping URLs based on their content. I think a lot more analysis would be needed to understand how useful this is, but in theory it could make a massive difference for some sites, even if it just gets the business to understand and govern its content.
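TF-IDF weights a word by how often it appears on a page (term frequency) against how many pages on the site use it (document frequency), so a word found on every page scores zero while a word concentrated on a few pages scores high. Safecont’s exact weighting is not documented; the sketch below uses one common variant, tf(t, d) × ln(N / df(t)), and also returns each word’s document frequency, which are the two axes of a graph like the one described. The sample pages are invented.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list:
    return re.findall(r"\w+", text.lower())


def tf_idf(pages: dict) -> tuple:
    """Return ({url: {term: tf-idf}}, {term: number of URLs using it}).

    tf is the term's count divided by the page's word count;
    idf is ln(N / df), so a term used on every page gets an idf of 0.
    """
    tokens = {url: tokenize(text) for url, text in pages.items()}
    df = Counter()
    for words in tokens.values():
        df.update(set(words))  # count each term once per page
    n = len(pages)
    scores = {}
    for url, words in tokens.items():
        counts = Counter(words)
        total = len(words)
        scores[url] = {t: (c / total) * math.log(n / df[t]) for t, c in counts.items()}
    return scores, df


pages = {
    "/kettles": "electric kettle kettle reviews and prices",
    "/toasters": "toaster reviews and prices",
    "/guide": "how to descale a kettle",
}
scores, df = tf_idf(pages)
for term, weight in sorted(scores["/kettles"].items(), key=lambda kv: -kv[1]):
    print(f"{term:10} urls={df[term]} tf-idf={weight:.3f}")
```

Many libraries add smoothing to the idf term so that unseen or ubiquitous words behave more gently; the unsmoothed form is kept here because it is the easiest to read.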

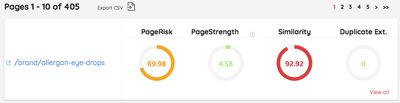

Pages

The Pages report provides a simple page-by-page report showing Page Risk, Page Strength, Similarity and External Duplication, which can be downloaded as a CSV for creating a priority spreadsheet.
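Once the report is exported, a few lines of code can turn it into a priority list. I do not have the exact column headings of Safecont’s export, so `url`, `page_risk` and `page_strength` below are assumed names and the rows are invented. The sketch ranks pages by Page Risk and, as one possible tie-break, puts stronger pages first, since they may be the ones most worth rescuing.

```python
import csv
import io

# Assumed export layout (hypothetical column names); rename to match the real CSV headers.
SAMPLE_EXPORT = """url,page_risk,page_strength,similarity,external_duplication
/shop/red-widget,82,40,75,0
/shop/blue-widget,82,55,70,0
/about,12,60,5,0
"""


def prioritise(csv_file, limit=None):
    """Return rows sorted by highest Page Risk, then highest Page Strength."""
    rows = list(csv.DictReader(csv_file))
    rows.sort(key=lambda r: (-float(r["page_risk"]), -float(r["page_strength"])))
    return rows[:limit]


for row in prioritise(io.StringIO(SAMPLE_EXPORT)):
    print(row["url"], row["page_risk"], row["page_strength"])
```

For a real export, pass a file opened with `open(path, newline="", encoding="utf-8")` instead of the in-memory sample.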

Architecture

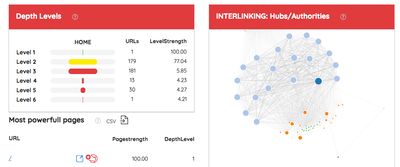

Safecont also has an architecture report that shows how the site structure is linked together, using a trendy visualisation library. As nice as this looks, it does not really give you much information. The depth levels section, though, can show you where your level strength is weak, and that is very useful.
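Depth levels are usually measured as click depth: the minimum number of links needed to reach a page from the home page. Safecont’s own level-strength calculation is not documented, but the depth part can be reproduced with a breadth-first search over your internal link graph. The link map below is a made-up example and the function names are hypothetical.

```python
from collections import deque


def click_depths(links: dict, start: str = "/") -> dict:
    """Breadth-first search: minimum number of clicks from `start` to each reachable page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit in BFS order is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def pages_per_level(depths: dict) -> dict:
    """Group pages by depth so you can see how content is spread across levels."""
    levels = {}
    for page, depth in sorted(depths.items(), key=lambda kv: (kv[1], kv[0])):
        levels.setdefault(depth, []).append(page)
    return levels


site = {
    "/": ["/shop", "/about"],
    "/shop": ["/shop/widgets", "/"],
    "/shop/widgets": ["/shop/widgets/red", "/shop/widgets/blue"],
    "/shop/widgets/red": ["/shop/widgets/red/spares"],
}

print(pages_per_level(click_depths(site)))
```

Pages missing from the result cannot be reached from the home page at all, which is worth checking alongside the depth figures.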

Summary

This is the first iteration of the online tool, so there are a few rough edges, but I am sure they will be smoothed out in good time as the service improves. The site is in Spanish but can be viewed in English, and most of the translation is very good, allowing you to test and make decisions based on the reports. The tool is quite confusing at first because many of the reports look similar, but helpful clickable “?” marks provide explanations for most topics.

Apparently, the AI they use makes 10^15 operations to analyse 100,000 URLs, which is 1,000,000,000,000,000 mathematical calculations. Difficult to replicate that yourself!
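Taking the quoted figure at face value as 10^15 operations for 100,000 URLs, the work per URL is:

```latex
\frac{10^{15}\ \text{operations}}{10^{5}\ \text{URLs}} = 10^{10}\ \text{operations per URL}
```

That is ten billion operations for every page analysed.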

I have seen similar systems in the past, but they were aimed at corporate governance and were very expensive. Safecont lowers the entry cost of decent content analysis. I believe this is a very useful tool, especially for auditing larger sites with a lot of content, as it lets you focus on improving the evergreen content. Well worth a look!

And there is more...

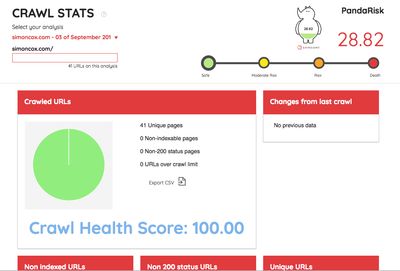

Crawl Stats update on the 3rd September 2018.

Safecont has introduced Crawl Stats showing the number of URLs tested and the health of the site.

There is also an Export CSV function in the Crawl Stats allowing you to download the raw data. This includes the scores from each section and is really useful for prioritising your focus on areas that need attention. The following image shows the scores from this site ordered by PageRank.

Update, 1st September 2022: Safecont appears to have gone. The domain is no longer responding, so I have removed the links to it.
